Key Takeaways

Verifiable AI is the idea that AI outputs, model behavior, or content provenance should come with evidence, not just assertions.

In practice, Verifiable AI can include content provenance, watermarking, cryptographic signatures, trusted execution environments (TEEs), and zero-knowledge proofs or other proof systems for inference.

The concept matters especially in crypto because smart contracts cannot safely rely on opaque offchain AI outputs without some trust-minimizing verification layer.

As of April 2026, the space includes emerging infrastructure narratives from projects and protocols such as Chainlink, Lagrange, and 0G, alongside broader provenance standards such as C2PA Content Credentials and media watermarking tools like SynthID.

Verifiable AI is promising, but it is still early. There is no single universal standard yet, and different systems verify different things.

Artificial intelligence is becoming more powerful, but it is also becoming harder to trust.

When an AI model summarizes a report, flags a transaction, prices an asset, triggers an onchain action, or generates an image, users usually have to trust that the system did what it claimed to do. In many cases, they cannot independently verify which model produced the output, what data it used, whether the output was altered, or whether the model ran inside an approved environment. That trust gap is exactly what Verifiable AI is trying to close.

OpenAI has described verifiability in AI as the ability to provide evidence for claims about AI systems, while NIST has emphasized techniques such as watermarking, cryptographic signatures, digital fingerprints, and model integrity verification as ways to support content provenance and trustworthy AI systems.

At a high level, Verifiable AI refers to methods that make AI systems more auditable, provable, and tamper-evident. Depending on the use case, that can mean proving the provenance of AI-generated content, proving that a specific model ran as claimed, proving that inputs and outputs were not changed, or proving that an offchain AI computation can be trusted by an onchain application. Chainlink’s recent description of the “verifiable AI stack” captures this well: it combines AI with cryptographic proofs and blockchain infrastructure so smart contracts can consume AI outputs while gaining integrity guarantees about offchain execution.

In other words, Verifiable AI is not one product or one token. It is a broader design philosophy: don’t just ask users to trust an AI system—give them evidence.

What Does “Verifiable AI” Actually Mean?

The term can sound abstract, so it helps to break it into a simple question:

What exactly are we verifying?

That could mean several different things:

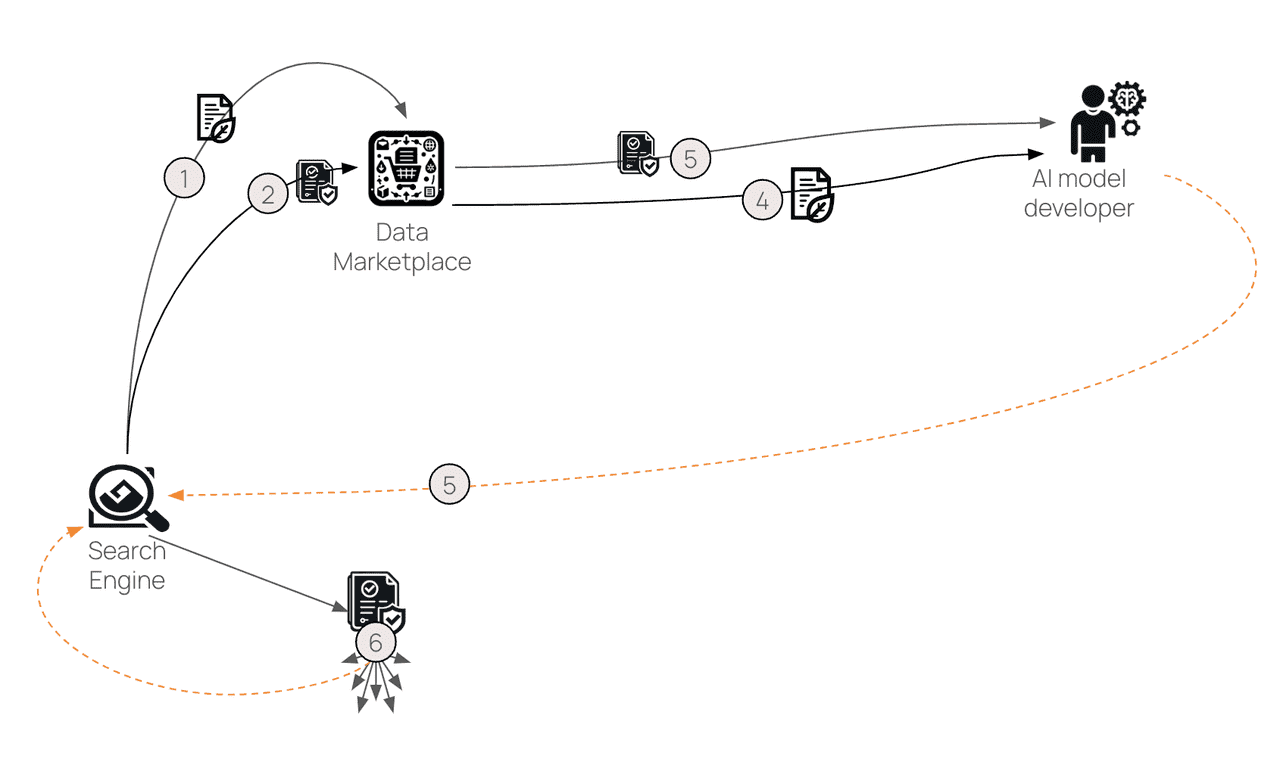

Verifying provenance — Was this image, video, audio clip, or document created or edited by AI, and what is its history? C2PA’s Content Credentials standard is built around this idea, describing Content Credentials as a way to establish provenance for digital content and securely bind statements about how content was created or modified.

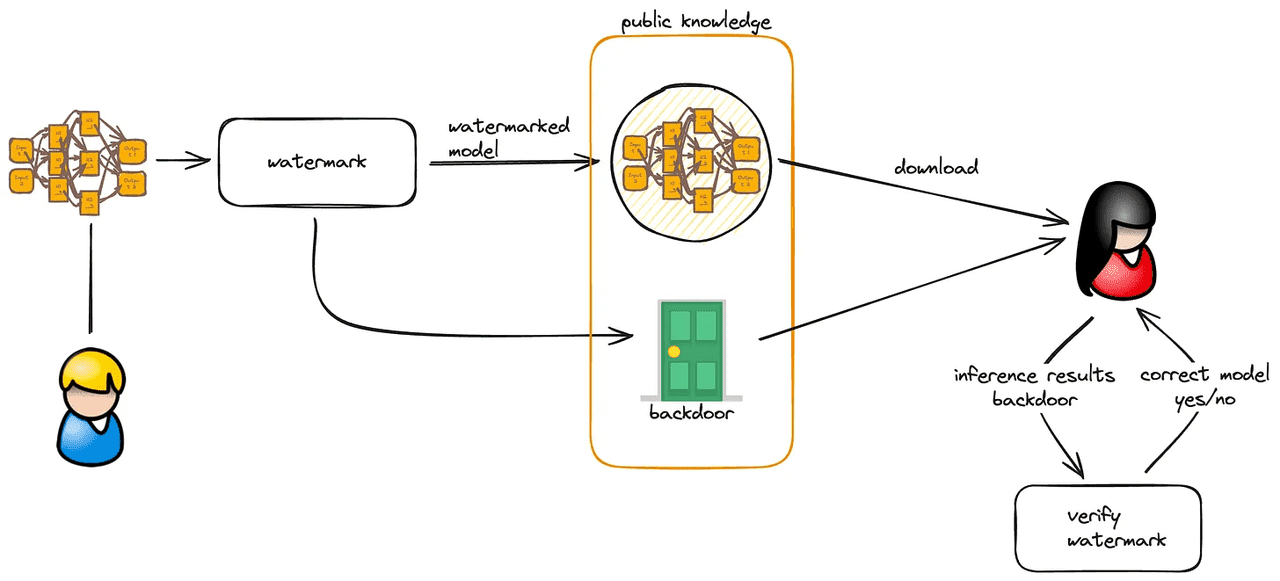

Verifying generation — Was this content produced by an approved AI system? Google DeepMind’s SynthID is one example of a watermarking approach designed to identify AI-generated or AI-altered content.

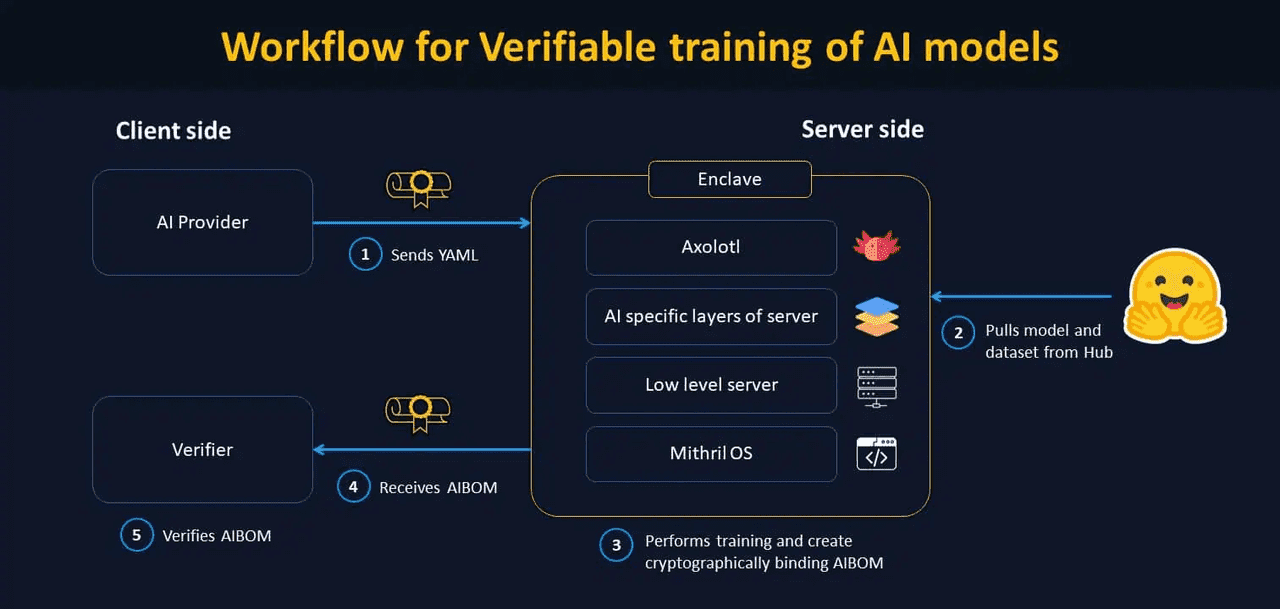

Verifying execution — Did the AI model actually run as claimed, on the intended inputs, in the intended environment, without tampering? This is where TEEs, attestation systems, and proof-based inference systems come in. Chainlink’s education materials and recent Verifiable AI write-up explicitly connect this problem to secure model execution and trusted computation.

Verifying computation for blockchains — Can a smart contract safely act on an AI output if the model ran offchain? Because blockchains cannot directly inspect opaque offchain inference, verifiable compute and proof layers become essential if AI is going to trigger high-value onchain actions.

So when someone says “Verifiable AI,” they may mean one or more of these layers at once. That is why the category is broad: one team may focus on authenticating media, another on proving inference correctness, and another on making AI agents auditable in onchain workflows.

Why Verifiable AI Matters

AI is useful precisely because it can act at a scale where humans cannot manually check everything. But that creates a problem: the more an output matters, the more it needs to be verifiable.

Imagine these examples:

An AI model flags suspicious transactions for a regulated financial platform.

An AI oracle feeds event analysis into a prediction market.

An autonomous AI agent rebalances an onchain portfolio.

A generated image or video goes viral during a market-moving news event.

A business wants proof that a model followed certain data-use or compliance constraints.

In all of those cases, trust cannot rely on “the model said so.” NIST’s guidance on synthetic content risk reduction highlights watermarking and content authentication as tools for provenance and integrity, while OpenAI’s work on improving verifiability in AI development frames verifiability as a way to provide evidence that AI systems are safe, secure, fair, or privacy-preserving.

Crypto makes this issue even sharper. Blockchains are designed to minimize trust, yet AI systems are often opaque, offchain, and difficult to audit. That is a natural mismatch. Chainlink’s recent materials make this point directly: verifiable AI and AI-agent orchestration are needed so offchain data processing and model-driven workflows can be reported back onchain with transparency, auditability, and policy compliance.

That is why Verifiable AI is becoming one of the more important sub-narratives inside AI x crypto. It tries to bridge the gap between intelligent systems and trust-minimized systems.

[Figure: Verifiable Workflow for Training AI]

The Main Building Blocks of Verifiable AI

Provenance and Content Credentials

One of the simplest forms of Verifiable AI is proving where content came from.

The C2PA framework is one of the leading standards here. Its explainer says Content Credentials provide a way to establish provenance of content and securely bind provenance statements to media. The group’s website describes Content Credentials as a kind of “nutrition label” for digital content that lets people inspect its history.

This does not magically tell users whether content is “true,” but it does help answer questions such as:

Who created it?

Was AI involved?

Was it edited?

What software touched it?

Has the provenance data been preserved?

That matters for journalism, brands, legal evidence, and any environment where fake or manipulated media can cause real harm. It also matters for crypto, where fake screenshots, fake documents, and AI-generated misinformation can move markets fast.
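The idea of binding provenance statements to content can be sketched in a few lines. This is a minimal illustration, not the C2PA manifest format: the `make_manifest` and `verify_manifest` helpers and the claim fields are hypothetical, and an HMAC with a shared demo key stands in for the public-key signatures a real provenance system would use.

```python
import hashlib
import hmac
import json

# Hypothetical signing key. Real provenance systems use public-key
# certificates; an HMAC is only a standard-library stand-in for a signature.
SIGNING_KEY = b"demo-key-not-for-production"


def make_manifest(content: bytes, claims: dict) -> dict:
    """Bind provenance claims to the exact bytes of a piece of content."""
    payload = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "claims": claims,
    }
    encoded = json.dumps(payload, sort_keys=True).encode()
    tag = hmac.new(SIGNING_KEY, encoded, hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": tag}


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """Return True only if the manifest is untampered and matches the content."""
    encoded = json.dumps(manifest["payload"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, encoded, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claims or the recorded hash changed after signing
    actual = hashlib.sha256(content).hexdigest()
    return hmac.compare_digest(actual, manifest["payload"]["content_sha256"])


image = b"example image bytes"
manifest = make_manifest(image, {"tool": "example-editor", "ai_generated": True})
print(verify_manifest(image, manifest))         # True
print(verify_manifest(image + b"x", manifest))  # False: content was modified
```

Note what the check does and does not establish: it shows that the claims were signed and still match these exact bytes, not that the content itself is accurate.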

Watermarking

Another layer is watermarking: embedding signals into AI-generated media so it can later be identified.

Google DeepMind’s SynthID is one of the clearest examples. DeepMind describes it as a tool to watermark and identify AI-generated content, designed to help foster transparency and trust in generative AI. For images, the system embeds an imperceptible watermark directly into pixels; DeepMind has also described audio watermarking approaches for generated music and sound.

Watermarking is useful, but it is not a complete solution. NIST’s synthetic content guidance notes that provenance and watermarking can help authentication, but they need to be evaluated for reliability, robustness, and resistance to removal or manipulation.

So watermarking is best understood as one piece of Verifiable AI—not the whole thing.
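The robustness concern is easy to see with a deliberately naive scheme. The sketch below is not how SynthID works; it is a toy least-significant-bit watermark, with hypothetical `embed` and `detect_score` helpers, chosen only to show why a watermark has to be evaluated against ordinary transformations.

```python
import random


def pattern(key: int, n: int) -> list[int]:
    """Derive a repeatable pseudo-random bit pattern from a secret key."""
    rng = random.Random(key)
    return [rng.randint(0, 1) for _ in range(n)]


def embed(pixels: list[int], key: int) -> list[int]:
    """Overwrite each pixel's lowest bit with the keyed pattern (toy scheme)."""
    bits = pattern(key, len(pixels))
    return [(p & ~1) | b for p, b in zip(pixels, bits)]


def detect_score(pixels: list[int], key: int) -> float:
    """Fraction of pixels whose lowest bit matches the keyed pattern."""
    bits = pattern(key, len(pixels))
    matches = sum((p & 1) == b for p, b in zip(pixels, bits))
    return matches / len(pixels)


KEY = 42  # hypothetical watermark key
rng = random.Random(1)
original = [rng.randrange(256) for _ in range(4096)]  # stand-in for image pixels
marked = embed(original, KEY)
requantized = [(p // 4) * 4 for p in marked]  # mild, lossy re-encoding

print(detect_score(marked, KEY))       # 1.0
print(detect_score(original, KEY))     # near 0.5, i.e. chance level
print(detect_score(requantized, KEY))  # near 0.5: the mark did not survive
```

A change of at most three intensity levels per pixel is enough to erase this mark, which is why production systems aim for signals that survive compression, cropping, and similar edits.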

Trusted Execution Environments (TEEs)

Another approach is to verify where and how a model ran.

TEEs are hardware-isolated environments that can help prove a computation happened inside a protected system. Chainlink’s explainer on TEEs in blockchain says they are especially relevant for AI because model weights and input data may be proprietary or sensitive, and TEEs can provide secure environments for verifiable AI while preserving confidentiality.

This is a big deal in decentralized compute networks. If developers are outsourcing inference to third-party machines, they need some confidence that the machine ran the correct model, did not expose the data, and did not fabricate the output. TEEs are one answer—though not a perfect one—because they provide attestation about the runtime environment.
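A rough sketch of what an attestation check involves is shown below. Everything here is simulated: `run_in_enclave` is an ordinary function rather than hardware-isolated code, the report fields are hypothetical, and an HMAC with a demo key stands in for the asymmetric, certificate-backed signatures that real TEEs use.

```python
import hashlib
import hmac
import json

# Hypothetical stand-in for a hardware vendor's attestation key.
VENDOR_KEY = b"demo-vendor-key"


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def sign(report: dict) -> str:
    encoded = json.dumps(report, sort_keys=True).encode()
    return hmac.new(VENDOR_KEY, encoded, hashlib.sha256).hexdigest()


def run_in_enclave(model: bytes, prompt: bytes) -> tuple[bytes, dict]:
    """Simulate an enclave: run a toy 'model' and emit a signed report."""
    output = b"score=" + sha256_hex(model + prompt)[:8].encode()  # toy inference
    report = {
        "model_sha256": sha256_hex(model),   # which model was loaded
        "input_sha256": sha256_hex(prompt),  # which input it received
        "output_sha256": sha256_hex(output), # which output it produced
    }
    return output, {"report": report, "signature": sign(report)}


def verify_attestation(att: dict, approved_model: str,
                       prompt: bytes, output: bytes) -> bool:
    """Accept the output only if the signed report matches expectations."""
    report = att["report"]
    return (
        hmac.compare_digest(sign(report), att["signature"])
        and report["model_sha256"] == approved_model
        and report["input_sha256"] == sha256_hex(prompt)
        and report["output_sha256"] == sha256_hex(output)
    )


approved = sha256_hex(b"model-weights-v1")
output, att = run_in_enclave(b"model-weights-v1", b"claim #123")
print(verify_attestation(att, approved, b"claim #123", output))  # True

rogue_output, rogue_att = run_in_enclave(b"model-weights-evil", b"claim #123")
print(verify_attestation(rogue_att, approved, b"claim #123", rogue_output))  # False
```

The verifier never inspects the model itself. It trusts the signing key and compares hashes, which is exactly where the hardware trust assumptions enter.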

Cryptographic Proofs of Inference

A stronger but harder approach is to prove the AI computation itself.

This is where zero-knowledge machine learning, verifiable inference, and proof-based systems come in. A 2025 survey on ZKP-based verifiable machine learning describes a broad research field focused on verifying training, inference, or testing without revealing sensitive information. More recently, a March 2026 paper proposed lightweight cryptographic proofs of inference that aim to reduce the heavy overhead of traditional proof systems for large models.

This matters because the holy grail of Verifiable AI is not merely “trust this secure box,” but “here is cryptographic evidence that the computation happened correctly.” In practice, that remains difficult for large models because proofs can be computationally expensive. But the research direction is clear: making AI inference more provable without making it unusably slow.
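Full zero-knowledge proofs of inference are well beyond a short example, but the underlying idea of checking a computation more cheaply than redoing it can be illustrated with Freivalds' algorithm, a classic probabilistic check for matrix multiplication. This is not the method of the papers mentioned above, and it hides nothing about the inputs; it is a sketch that assumes a single linear layer with small integer (quantized) weights, and it draws random entries from a wider range than the classic 0/1 version to lower the error rate.

```python
import random


def matmul(a: list[list[int]], b: list[list[int]]) -> list[list[int]]:
    """Plain matrix product, used here by the (untrusted) prover."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def matvec(m: list[list[int]], v: list[int]) -> list[int]:
    return [sum(x * y for x, y in zip(row, v)) for row in m]


def freivalds_check(a, b, c, rounds: int = 20, seed: int = 0) -> bool:
    """Probabilistically check a @ b == c using only matrix-vector products."""
    rng = random.Random(seed)
    n = len(c[0])
    for _ in range(rounds):
        r = [rng.randrange(2**16) for _ in range(n)]
        # If c is correct, a @ (b @ r) equals c @ r for every r.
        if matvec(a, matvec(b, r)) != matvec(c, r):
            return False
    return True


weights = [[2, -1, 0], [1, 3, -2]]       # hypothetical 2x3 quantized layer
inputs = [[1, 4], [0, -2], [5, 1]]       # batch of two 3-dimensional inputs
claimed = matmul(weights, inputs)        # honest prover's result
print(freivalds_check(weights, inputs, claimed))   # True

tampered = [row[:] for row in claimed]
tampered[0][0] += 1                      # prover lies about one output value
print(freivalds_check(weights, inputs, tampered))  # False, with overwhelming probability
```

Each round costs a few matrix-vector products instead of a full matrix product, and a wrong result slips through only if the random vector happens to mask the error in every round.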

[Figure: Example of Use Case]

What Verifiable AI Means in Crypto

In traditional software, trust often comes from reputation, contracts, and centralized oversight. In crypto, the bar is different: users expect systems to be open, composable, and independently auditable.

That creates a tension. AI is increasingly useful for trading, automation, compliance, and prediction, but the output of a black-box model is hard to reconcile with trust-minimized infrastructure. Chainlink’s recent articles on AI orchestration and the verifiable AI stack argue that this is exactly where decentralized oracle networks, consensus-based reporting, and verifiable compute can play a role. Inputs can be fetched and processed across decentralized infrastructure, then reported back onchain in a way that reduces reliance on any single operator.

This is especially relevant for:

AI oracles for prediction markets, insurance, or event-driven protocols

Autonomous trading agents that need trusted data and auditable actions

Compliance workflows where institutions need proof of policy enforcement

Onchain consumer apps that want to use AI without fully centralizing trust

Put simply, Verifiable AI is what makes “AI on blockchain” more than just a marketing phrase.
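The consensus-based reporting mentioned earlier in this section can be sketched as a quorum plus a median. This is an illustrative aggregation rule, not Chainlink's actual protocol; the node names, the `quorum` and `max_deviation` parameters, and the `aggregate` function are all assumptions made for the example.

```python
from statistics import median


def aggregate(reports: dict[str, float], quorum: int, max_deviation: float) -> float:
    """Combine independent node reports, ignoring outliers, or refuse to answer."""
    if len(reports) < quorum:
        raise ValueError("not enough reports")
    midpoint = median(reports.values())
    agreeing = [v for v in reports.values() if abs(v - midpoint) <= max_deviation]
    if len(agreeing) < quorum:
        raise ValueError("nodes disagree too much to report a value")
    return median(agreeing)


# Four hypothetical nodes each ran the same model on the same event data.
reports = {"node-a": 0.71, "node-b": 0.70, "node-c": 0.72, "node-d": 0.05}
print(aggregate(reports, quorum=3, max_deviation=0.05))  # 0.71
```

A single faulty or dishonest node (`node-d` here) cannot move the reported value, and if too few nodes agree the function raises instead of reporting a guess.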

Examples of Verifiable AI Narratives in the Market

Chainlink: The Verifiable AI Stack

Chainlink has been one of the clearest voices framing Verifiable AI as a stack that combines AI, cryptographic proofs, and blockchain infrastructure. Its recent materials connect this directly to smart contracts, TEEs, agent orchestration, institutional smart contracts, and verifiable offchain computation.

For Academy readers, the important point is not just “Chainlink is doing AI,” but that it is articulating a framework for how AI outputs become trustworthy enough for onchain use.

Lagrange: ZK-Powered Verifiable AI

Lagrange’s official docs explicitly position the project around verifiable technology and say its DeepProve product is for verifiable AI. The project’s framing is direct: “The future of AI is ZK.” That places Lagrange firmly in the camp that believes zero-knowledge proof systems will be core infrastructure for trusted AI computation.

0G: Infrastructure for Decentralized AI

0G’s documentation is broader than just verifiability, but its ecosystem positioning is closely tied to decentralized AI infrastructure, including decentralized inference, storage, and data availability. In public materials around the ecosystem, 0G has also leaned into “verifiable AI” as part of its infrastructure narrative. Its docs show how developers can access inference services and how the network handles data availability and verification through specialized nodes.

These examples matter because they show that Verifiable AI is not just an academic term. It is becoming a live product and infrastructure narrative across the crypto market.

How Verifiable AI Could Work in Practice

A useful way to think about Verifiable AI is as a chain of evidence.

For example, imagine an onchain AI-powered insurance product:

A user submits a claim with images and documents.

A model analyzes the claim offchain.

The system records provenance for the submitted and generated media.

The model runs inside a TEE or another attested environment.

A decentralized network verifies inputs or aggregates model outputs.

The result is signed, logged, and reported onchain.

The smart contract only acts if the required evidence checks pass.

Different systems will implement different parts of this stack, but the idea is the same: make every critical step more inspectable and harder to fake. Chainlink’s recent materials on institutional smart contracts and AI orchestration map closely to this kind of architecture, while C2PA and watermarking systems address the media and provenance side.
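The final step of that flow, acting only when the evidence checks pass, can be sketched as a simple gate. This is an offchain Python simulation rather than contract code, and the `Evidence` fields, the quorum size, and `settle_claim` are hypothetical names chosen for the example.

```python
from dataclasses import dataclass

REQUIRED_AGREEMENT = 3  # hypothetical quorum of independent nodes


@dataclass(frozen=True)
class Evidence:
    provenance_ok: bool   # media provenance records verified
    attestation_ok: bool  # model ran in an attested environment
    agreeing_nodes: int   # nodes that reported a matching result
    result_signed: bool   # final result carries a valid signature


def failed_checks(evidence: Evidence) -> list[str]:
    """List every evidence requirement that was not met."""
    failures = []
    if not evidence.provenance_ok:
        failures.append("provenance")
    if not evidence.attestation_ok:
        failures.append("attestation")
    if evidence.agreeing_nodes < REQUIRED_AGREEMENT:
        failures.append("node agreement")
    if not evidence.result_signed:
        failures.append("signature")
    return failures


def settle_claim(evidence: Evidence, payout: int) -> int:
    """Pay out only when every evidence check passes; otherwise pay nothing."""
    return 0 if failed_checks(evidence) else payout


good = Evidence(True, True, 4, True)
bad = Evidence(True, False, 2, True)
print(settle_claim(good, 1000))  # 1000
print(failed_checks(bad))        # ['attestation', 'node agreement']
print(settle_claim(bad, 1000))   # 0
```

Returning the list of failed checks, rather than a bare yes or no, keeps the decision itself auditable.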

[Figure: Role of Data in Verifiable AI]

Benefits of Verifiable AI

The biggest benefit is obvious: more trust. But that trust breaks down into several specific gains.

First, Verifiable AI can improve auditability. Instead of asking users to trust a model provider’s statement, systems can provide artifacts such as credentials, signatures, attestations, or proofs.

Second, it can improve compliance readiness. Chainlink’s recent materials on compliance AI and institutional smart contracts show why regulated finance cares: policy enforcement, reporting, and audit trails become easier when the infrastructure is designed to prove what happened.

Third, it can improve resilience against manipulation. Provenance systems and watermarking will not stop all deepfakes or misinformation, but they can make tampering more detectable and provide stronger authenticity signals.

Fourth, it can improve composability for Web3. Smart contracts, agents, and protocols can use AI more safely when they can inspect the evidence attached to an output instead of blindly trusting it.
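One simple artifact behind the auditability and audit-trail points above is a hash-chained log, in which each entry commits to the one before it. The sketch below is a generic illustration with hypothetical event fields, not the logging format of any project named in this article.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor


def entry_hash(prev: str, event: dict) -> str:
    body = json.dumps({"prev": prev, "event": event}, sort_keys=True).encode()
    return hashlib.sha256(body).hexdigest()


def append_entry(log: list[dict], event: dict) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    prev = log[-1]["entry_hash"] if log else GENESIS
    log.append({"prev": prev, "event": event, "entry_hash": entry_hash(prev, event)})


def verify_log(log: list[dict]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["entry_hash"] != entry_hash(prev, entry["event"]):
            return False
        prev = entry["entry_hash"]
    return True


log: list[dict] = []
append_entry(log, {"step": "input received", "input_id": "claim-123"})
append_entry(log, {"step": "model output", "decision": "approve"})
append_entry(log, {"step": "reported onchain", "status": "ok"})
print(verify_log(log))  # True

log[1]["event"]["decision"] = "reject"  # someone rewrites history
print(verify_log(log))  # False
```

Publishing only the latest entry hash onchain would be enough to let anyone later detect changes to the full offchain log.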

Limitations and Risks

Verifiable AI is promising, but it is not magic.

One major limitation is that different methods verify different things. A watermark can indicate that media was AI-generated, but it does not prove the underlying claim in the media is true. A provenance record can show edit history, but not whether the content is accurate. A TEE can attest to the environment, but not necessarily every property users care about. And proof systems for inference are still resource-intensive for many large-scale models.

Another challenge is fragmented standards. C2PA is important for provenance, but it is not a universal answer for AI execution. ZK systems are advancing, but they are not yet a universal runtime layer for all AI applications. TEEs are practical, but they introduce their own trust assumptions around hardware and attestation.

There is also a cost and performance tradeoff. Stronger verification usually means more overhead. That is one reason current research keeps exploring lighter-weight proof systems and hybrid approaches rather than assuming every inference will soon be fully proven at low cost.

Finally, there is a market risk in crypto: projects may use “Verifiable AI” as a narrative label even when their actual verification guarantees are narrow.

Conclusion

Verifiable AI is one of the most important ideas emerging from the collision between AI and crypto because it tackles a core weakness of modern AI systems: opacity.

As AI becomes more deeply embedded in financial apps, onchain agents, media systems, compliance workflows, and autonomous software, users will increasingly need more than model outputs. They will need proofs, provenance, attestations, and audit trails. That is the real promise of Verifiable AI.

The category is still early as of April 2026, and it does not yet have one universal architecture. Instead, it is developing as a stack: provenance standards such as C2PA, watermarking tools such as SynthID, trusted runtime methods such as TEEs, and more advanced proof systems for inference and onchain settlement. Projects and infrastructure providers like Chainlink, Lagrange, and 0G are helping push different parts of that stack forward.

The simplest way to understand the thesis is this: Verifiable AI is about turning AI from something you merely use into something you can inspect and trust with evidence. If that shift continues, it could become one of the foundational ideas behind the next generation of AI-powered crypto applications.
